Docker Security - What I Learned From Creating My First Full-Stack App After University

As a recent university Computer Science graduate, I enjoy experimenting and learning by doing in my free time. This blog is a way for me to showcase the skills and topics that I have learnt and encountered.

The One Thing That University Didn't Teach 🤔

Docker. It's everywhere. From hosting simple applications to running the micro-services required by your favorite streaming platforms. This magical technology unfortunately did not make the cut for the University course I attended. As such, it has been my hobby to containerize and manage small applications in an effort to learn more about this technology. I have personally run into each of the following Docker security conundrums and have learnt by developing and experimenting, as most junior developers should.

Problem #1 - Vulnerabilities

After developing my first full-stack app with Node.js, I naturally decided that I would bundle and containerize the application to allow for better portability and distribution. So the first thing I wrote, much like many other developers, was the conventional FROM node.

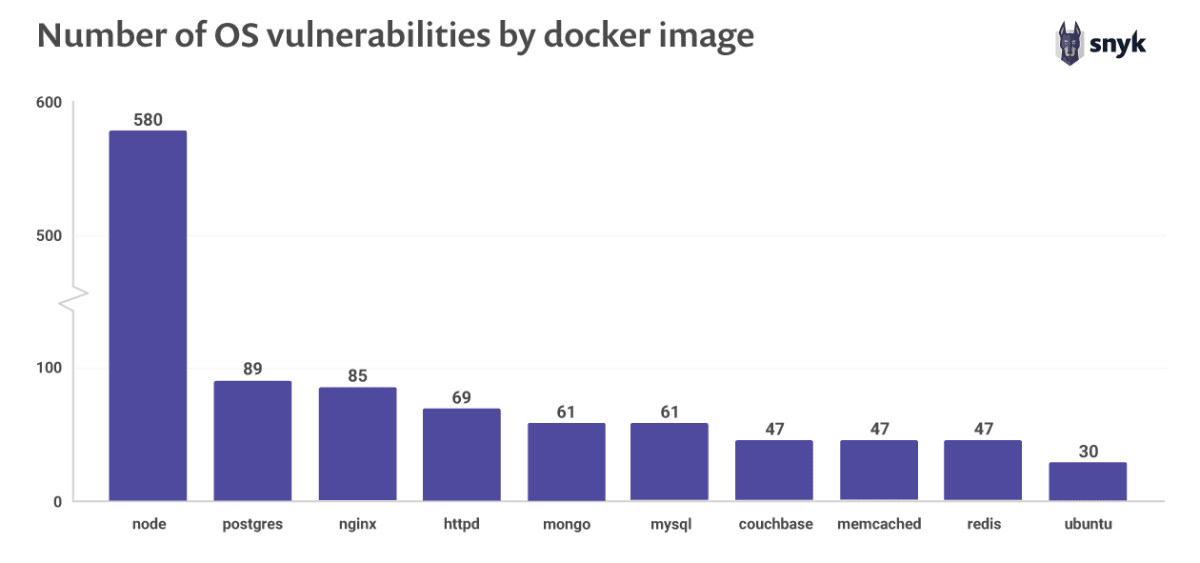

Did you see the problem? No? Neither did I at first, but the people at Snyk did. By using the node base image, I had inadvertently inherited a large number of pre-existing vulnerabilities found within the base image.
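If you want to see a report like this for yourself, the Snyk CLI can scan an image directly. The snippet below is only a rough sketch: it assumes the `snyk` CLI is installed and authenticated, and `scan_image` is a helper name I made up for illustration.

```shell
#!/bin/sh
# Scan a container image for known vulnerabilities with the Snyk CLI.
# Assumption: `snyk` is installed and you have already run `snyk auth`.
# `scan_image` is a hypothetical helper, not part of any tool.
scan_image() {
  image="${1:-node:latest}"
  if command -v snyk >/dev/null 2>&1; then
    # Exits non-zero when vulnerabilities are found.
    snyk container test "$image"
  else
    echo "snyk CLI not found; cannot scan $image" >&2
    return 1
  fi
}
```

Calling `scan_image node:latest` and then `scan_image ubuntu:20.04` should give you a feel for how much a base image alone contributes, although the exact numbers will differ from mine as images are updated.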

Solution ✔

By changing to a more secure base image, such as ubuntu, the number of vulnerabilities instantly drops dramatically. Taking the node image as an example, simply installing Node yourself within an Ubuntu 20.04 base brings the number of vulnerabilities down from nearly 600 to only 23 (as reported by Snyk).

This can be achieved simply, using the following as a baseline for your Dockerfile:

FROM ubuntu:latest

# Install Node.js and dependencies

RUN apt-get update -yq \

&& apt-get install curl gnupg -yq \

&& curl -sL https://deb.nodesource.com/setup_14.x | bash \

&& apt-get install nodejs -yq

# Copy and Setup App

WORKDIR /app

COPY . /app

RUN npm install

# Expose Port and Run

EXPOSE 5000

CMD ["npm", "start"]

Problem #2 - Execution Within A Container

The next problem that arises is the use of the root user within a Docker container. By default, if a user is not specified, commands executed within a container (as well as applications running within it) run as root. This means that anyone who compromises the application, or breaks out of the container, could potentially gain root access to the host system 🙅♂️. You don't need to be a security expert to see how bad this can be.

Solution ✔

By creating a less-privileged user and switching to it with the USER directive, you can easily stop the application from executing as root. It is best to do this near the end of the Dockerfile, allowing for the application to be set up correctly (without any file permission errors). Using the Dockerfile described previously, this would look like the following:

...

# Copy and Setup App

WORKDIR /app

COPY . /app

RUN npm install

# Expose Port

EXPOSE 5000

# Create and Change to User 'app' and Run

RUN groupadd -r app \

&& useradd -r -s /bin/false -g app app

USER app

CMD ["npm", "start"]
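A quick way to convince yourself that the switch worked is to ask the container for its user ID. This is a minimal sketch, assuming you built the image with a tag such as `my-app` (a made-up name); `describe_uid` is likewise just an illustrative helper.

```shell
#!/bin/sh
# Hypothetical image tag; use whatever you passed to `docker build -t`.
IMAGE="${IMAGE:-my-app}"

# Report whether a numeric UID is root (0) or an unprivileged user.
describe_uid() {
  if [ "$1" -eq 0 ]; then
    echo "root"
  else
    echo "non-root"
  fi
}

# Only talk to Docker when the CLI is installed and the image exists,
# so the script is harmless to run anywhere.
if command -v docker >/dev/null 2>&1 && docker image inspect "$IMAGE" >/dev/null 2>&1; then
  # `id -u` replaces the image's CMD for this one-off run.
  uid="$(docker run --rm "$IMAGE" id -u)"
  echo "Container process runs as: $(describe_uid "$uid") (uid $uid)"
fi
```

With the USER directive in place I would expect this to report a non-root UID, and 0 without it.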

Problem #3 - Saving Files to Host

So you've used a more secure base image and swapped to a less-privileged user, but you want to write data to the host to persist across the container life-cycle, such as configuration data? No problem, you just specify a Bind Mount or Volume by using the docker run -v command. Simple.

But wait. We've swapped to a less-privileged user that doesn't have write permission to the host machine. How do we perform this simple operation now, without risking the security of the application? This was a question that I was stuck on for a good deal of time when containerizing my application, until I found a helpful answer on Stack Overflow.
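It helped me to realise that file permissions are decided by numeric user IDs, not by names, and that a bind-mounted folder keeps whatever owner it has on the host. The sketch below only inspects the host side; it assumes GNU `stat` (as found on most Linux systems), and both `owner_uid` and the `my-app` tag in the comment are names I made up.

```shell
#!/bin/sh
# Print the numeric UID that owns a path.
# Assumes GNU coreutils `stat` (Linux); BSD/macOS would need `stat -f '%u'`.
owner_uid() {
  stat -c '%u' "$1"
}

# Stand-in for the host folder you would bind-mount (temporary, hypothetical).
host_config="$(mktemp -d)"

echo "my uid on the host:   $(id -u)"
echo "owner of host folder: $(owner_uid "$host_config")"

# With Docker available, the container side of the comparison would be:
#   docker run --rm -v "$host_config:/app/config" my-app id -u
# If that prints a different number, 'app' is just "other" on that folder
# and will typically not be allowed to write to it.

rmdir "$host_config"
```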

Solution ✔

This answer, written by Dimitris, kindly explains that the Docker container should fix any file permissions for mounted directories before running the application, and points towards the implementation of the Redis Docker container. From inspecting this repository (along with some further reading), the power of the ENTRYPOINT command became clear. By using the entrypoint of the Dockerfile to run a script instead of running the application, the container can be started with directories already mounted (make note, this is key), and the script can perform operations immediately before the application starts.

Say you have a configuration folder within the container at /app/config, the Dockerfile to achieve this, based on the previous ubuntu image generated, is as follows:

FROM ubuntu:latest

# Add User/Group 'app'

RUN groupadd -r app \

&& useradd -r -s /bin/false -g app app

# Install Node.js and dependencies

RUN apt-get update -yq \

&& apt-get install curl gnupg gosu -yq \

&& curl -sL https://deb.nodesource.com/setup_14.x | bash \

&& apt-get install nodejs -yq

# Copy and Setup App

WORKDIR /app

VOLUME /app/config

COPY . /app

# Hand /app to 'app' only once it exists (WORKDIR and COPY create it)

RUN npm install \

&& chmod +x docker-entrypoint.sh \

&& chown -R app:app /app

ENTRYPOINT ["./docker-entrypoint.sh"]

EXPOSE 5000

CMD ["npm", "start"]

Note that the new internal user is created at the beginning of the file, using the base image OS's way of creating a user, so that every later step that refers to 'app' can handle permissions successfully. Also, gosu is installed to handle the step-down to the less-privileged user. The contents of docker-entrypoint.sh are found below:

#!/bin/bash

# Exit if a process exits with an error code

set -e

# if 'CMD' Directive in Dockerfile begins with 'npm'

if [ "$1" = 'npm' ]; then

# Make Owner of Configuration Folder/Files Newly Created User

chown -R app:app /app/config

# Optional

echo "Finished Fixing Permissions"

# Change User to 'app' and Run 'CMD' in Dockerfile

exec gosu app "$@"

fi

# Change Process to PID 1 for Monitoring

exec "$@"

Breaking down the file above, the following functionality is achieved:

- Checks if the CMD directive within the Dockerfile begins with 'npm'. This allows for the potential of different flows for different environments, e.g. Development or Production

- Changes the ownership of the now-mounted configuration folder to the 'app' user we created earlier, enabling write permissions to the mounted host folder (remember, the folder was declared as a volume within the Dockerfile, whereas this script executes when the container has just started, not on build or extraction)

- Uses gosu to change user to that of 'app' and execute the Dockerfile's CMD directive as this user (hence, the application is run as 'app')
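To make the branching in the list above concrete, here is a small dry-run of the same check. It does not call chown or gosu at all; `entrypoint_user` is a hypothetical helper that only reports which user the command would end up running as.

```shell
#!/bin/sh
# Dry-run of the branch in docker-entrypoint.sh: print which user the
# command would run as, without touching permissions or switching user.
# `entrypoint_user` is a made-up helper, not part of the original script.
entrypoint_user() {
  if [ "$1" = 'npm' ]; then
    echo "app"   # real script: chown the config folder, then exec gosu app "$@"
  else
    echo "root"  # real script: falls through to exec "$@"
  fi
}

echo "npm start -> $(entrypoint_user npm start)"
echo "bash      -> $(entrypoint_user bash)"
```

One consequence worth keeping in mind: since this Dockerfile has no USER directive, anything that does not start with 'npm' (for example, overriding the command with a shell for debugging) still runs as root.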

By using the docker-entrypoint.sh script, the mounted configuration folder has its ownership changed to the created user, and the application is run as said user. This allows the application to write files to the configuration folder on the host machine without any permission errors, allowing vital data to be stored without compromising the security of the host or the Docker container.
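One detail of the script that I found worth seeing in isolation is `exec`. Rather than starting the application as a child of the script, `exec` replaces the script's process, which is how the application ends up as the container's main process. The following is a small demonstration with plain `sh`, no Docker needed.

```shell
#!/bin/sh
# A child shell prints its PID, then execs a second shell that prints its PID.
# Because exec replaces the process instead of forking, both numbers match.
pids="$(sh -c 'echo "$$"; exec sh -c "echo \$\$"')"
first="$(echo "$pids" | sed -n 1p)"
second="$(echo "$pids" | sed -n 2p)"
echo "before exec: $first, after exec: $second"
```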

The Takeaway

There are many ways in which one can improve the security of Docker containers, for the benefit of both the host and the container itself. These are only the initial steps anyone should take to help lock down their container; just as important is following correct security guidelines when developing the application itself. The container could be the Fort Knox of containers, yet if the application code is not developed securely, security risks are still a very big possibility.